Companies usually leave GCP not because of one big problem, but because of a combination of three things: the bill becomes less predictable, platform limitations begin to slow down work, and the Google ecosystem itself makes the project increasingly dependent on its own logic.

In practice, those three things look like this:

- The business notices that it is paying not only for virtual machines, databases, and storage, but also for networking overhead, traffic, supporting services, and “small things” that add up to an unpleasantly large amount.

- The team starts running into quotas, limits, and platform rules rather than into the limits of the idea or the product.

- Then it becomes clear that the project is already too deeply tied to services and processes inside Google Cloud — and leaving that model will be much harder than it seemed at the start.

This is not only theory. Google Cloud has separate pages for quotas, network pricing, and the overall cost model — and the very presence of so many rules and pricing layers already says a lot about the nature of the problem.

Below, we will look at when GCP stops helping and starts getting in the way, where the budget begins leaking unnoticed, how platform limitations affect the team’s pace, and what practitioners, reviews, and market signals say about all of this.

When GCP Stops Helping and Starts Getting in the Way

Usually, this does not happen in a single day. At first, the platform simply stops feeling like a convenient background for the project.

At some point, the bill becomes less transparent. At some point, a new task suddenly brings with it another layer of networking logic, another limit, or another cost item. And at some point, the team can no longer quickly answer a simple question: what exactly is the business paying for, and what is it actually getting in return?

This is especially noticeable for a small or mid-sized project. Imagine an on-demand bodyguard hiring service — logically, something like Uber, except instead of a ride, the user requests security, chooses the escort format, number of cars, number of staff, and working time. A site or app like this quickly accumulates personal accounts, requests, geolocation, notifications, a client database, internal roles, logging, files, payment logic, and most likely heightened requirements for reliability and response speed.

At the start, GCP may look like a perfectly normal environment for such a project. But as the business grows, it notices that the platform is more complicated than the current task actually requires. The irritation comes not from one major failure, but from the fact that the team increasingly has to think about the rules of the environment instead of the product.

Here is what that looks like:

| What starts to become irritating | How it feels in the business |
| --- | --- |
| The bill becomes harder to read | It becomes harder to explain what exactly is driving costs up |
| Quotas and limits surface at the wrong moment | The team runs into the boundaries of the environment rather than the idea itself |
| The network layer starts living a life of its own | Traffic and infrastructure overhead demand more attention and money than expected |
| Support stops being a “small detail” | Even the basic level of help starts becoming part of the economic equation |
| The architecture accumulates service dependencies | Any change starts requiring more time and more caution |

For a service that hires security personnel, this is especially sensitive. The user is not just browsing a catalog. They submit a request, wait for confirmation, may provide sensitive data, and count on speed — they are not prepared to figure out why the platform suddenly introduced delays, limitations, or strange behavior under load. So even if the problem looks “technical,” it is already hitting trust in the service itself.

But here it is important not to confuse fatigue from the platform with a real reason to leave.

Sometimes GCP really does stop matching the needs of the business. And sometimes the problem is something else: the project has grown, costs and dependencies have become more complicated, and the team has gone too long without cleaning up the operational mess that has built up.

That is exactly why the next question matters more than emotions: at what point does the budget start leaking not through one big hole, but through dozens of small ones?

Cost: Where GCP Starts Becoming Frustrating for More Than Just One Line on the Bill

What the Business Pays for Besides the “Core Infrastructure”

On paper, a project usually counts virtual machines, databases, and storage. In practice, they are quickly joined by data transfer, networking charges, backup scenarios, logs, service operations, and support.

With Google Cloud, this is visible even in the official documentation structure. The platform has separate pages for network pricing, quotas, and support, while the pricing overview separately highlights budgets, alerts, and cost-control tools. That alone shows quite clearly that the bill here is not made up of one layer of “core infrastructure,” but of several layers at once.

This is especially easy to see in a simple breakdown:

| What the team sees as the main cost | What later starts eating into the budget |
| --- | --- |
| Virtual machines and databases | Data transfer, network layer, logs, backups, service traffic |
| Storage as a “clear” line item | Requests, operations, data movement, and related activity |
| Basic environment support | Paid support, if the project already needs faster response times |
| One service as the unit of calculation | The entire cloud overhead built around it |

But the table shows only the top layer.

In practice, the most unpleasant part starts when the business no longer just feels the bill growing, but can no longer quickly and calmly explain which exact part of platform life is pulling the budget upward.
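One way to make that question answerable is to split the bill into "core" and "everything else" and track the second share over time. The sketch below is only an illustration: the category names, the choice of which categories count as core, and all amounts are invented for the example and do not come from any real invoice or from a Google Cloud billing export.

```python
from typing import Mapping

# Assumption: these three categories are what the team thinks of as
# "core infrastructure". Adjust the set to match your own bill.
CORE_CATEGORIES = frozenset({"compute", "database", "storage"})


def overhead_share(line_items: Mapping[str, float]) -> float:
    """Return the fraction of the total bill that is NOT core infrastructure."""
    total = sum(line_items.values())
    if total <= 0:
        raise ValueError("the bill total must be positive")
    overhead = sum(
        cost for category, cost in line_items.items()
        if category not in CORE_CATEGORIES
    )
    return overhead / total


# Made-up monthly bill, in USD, purely for illustration.
example_bill = {
    "compute": 1200.0,
    "database": 600.0,
    "storage": 200.0,
    "data_transfer": 260.0,
    "logging": 90.0,
    "backups": 70.0,
    "support": 80.0,
}

print(f"Overhead share: {overhead_share(example_bill):.0%}")
```

A single number like this does not explain the bill, but watching it drift month over month shows whether growth is coming from the services the team planned for or from the layers around them.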

Why the Bill Does Not Break Suddenly, but Creeps Up Quietly

This is the stickiest part of GCP costs. The budget rarely “explodes” because of one line item. Instead, it spreads out through a set of small, seemingly normal expenses that do not look dangerous for a long time.

For example, in Google Cloud, Premium Tier is used by default for data transfer unless Standard Tier is selected separately. And Standard Support starts at $29 per month or 3% of monthly cloud charges, whichever is higher. As the project grows, even things like this stop being minor details and become part of the overall economics of the platform.
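The support rule quoted above is simple arithmetic, and writing it out shows how it scales with the bill. This is a minimal sketch that takes the figures in this article at face value ($29 minimum, 3% of monthly charges, whichever is higher). The function names are illustrative, and current pricing should be checked against Google Cloud's own support page.

```python
def standard_support_fee(monthly_cloud_charges: float,
                         minimum_fee: float = 29.0,
                         percent_of_charges: float = 0.03) -> float:
    """Return the larger of the flat minimum and the percentage-based fee."""
    if monthly_cloud_charges < 0:
        raise ValueError("monthly_cloud_charges must be non-negative")
    return max(minimum_fee, percent_of_charges * monthly_cloud_charges)


def break_even_charges(minimum_fee: float = 29.0,
                       percent_of_charges: float = 0.03) -> float:
    """Monthly charges above which the percentage, not the minimum, applies."""
    return minimum_fee / percent_of_charges


# Hypothetical monthly bills, in USD, to show how the fee grows.
for charges in (500, 2_000, 10_000, 50_000):
    print(f"{charges:>7} -> support fee {standard_support_fee(charges):.2f}")
```

Under these assumptions the fee stays at the $29 minimum until monthly charges pass roughly $967. After that it grows in step with the bill, which is how a "small detail" becomes a real line item for a growing project.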

That is why the bill does not usually break all at once — it creeps upward. While the project is small, this may still be manageable. But as it grows, the business increasingly faces not one “wrong” price, but the feeling that the entire cost model has become harder to control.

And from here, the next logical step is to look at the moment when the problem stops being only about money and starts running into the limitations of the platform itself.

Limitations: When Platform Rules Start Dictating the Pace

When Quotas and Limits Start Hurting Growth

While the project is small, these limitations may barely get in the way. But as the project grows, they start feeling less like a technical detail and more like a business factor.

In Google Cloud, quotas can exist not only at the project level, but also at the folder, organization, region, and zone level. For the business, that means something simple: the more services, teams, environments, and geographies a project has, the higher the chance that limitations will appear not in a calm moment, but exactly when they are least convenient.

For a service like an on-demand bodyguard hiring platform, this is especially unpleasant. As the business grows, the team wants to add new coverage zones faster, connect more integrations, introduce more internal automation, run more background processes, and build a more reliable network design.

But if, at that moment, the project starts running into limits for networks, load balancers, APIs, or other resources, the issue no longer looks like a “cloud detail.” It starts slowing down the product itself.
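A team can at least see these limits coming by tracking how close each quota is to its ceiling. The sketch below is a hypothetical illustration: the quota names, scopes, usage figures, and the 80% warning level are all invented, and the code does not call any Google Cloud API. In a real setup the numbers would come from whatever quota reporting the team already uses.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class QuotaUsage:
    name: str     # hypothetical label, not an official quota identifier
    scope: str    # e.g. "project", "region", "zone"
    used: float
    limit: float

    @property
    def utilization(self) -> float:
        """Fraction of the limit in use; a zero limit counts as exhausted."""
        return self.used / self.limit if self.limit > 0 else float("inf")


def quotas_near_limit(quotas: Iterable[QuotaUsage],
                      warn_at: float = 0.8) -> List[QuotaUsage]:
    """Return quotas at or above the warning level, most constrained first."""
    flagged = [q for q in quotas if q.utilization >= warn_at]
    return sorted(flagged, key=lambda q: q.utilization, reverse=True)


# Invented sample data for illustration only.
sample = [
    QuotaUsage("forwarding_rules", "project", used=13, limit=15),
    QuotaUsage("api_requests_per_minute", "project", used=300, limit=1000),
    QuotaUsage("cpus", "region", used=24, limit=24),
]

for q in quotas_near_limit(sample):
    print(f"{q.name} ({q.scope}): {q.utilization:.0%} of limit")
```

Sorting by utilization puts the tightest constraint first, so the conversation about a new coverage zone can start from the limit most likely to block it.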

Why the Team Runs Into the Environment’s Boundaries, Not the Idea

This is where it becomes especially frustrating. The business discusses a new feature, a new market, or a new user flow, and the team answers not with product logic but with quotas, network limits, a capacity-increase request, and a discussion of how that request may affect the rest of the environment.

Google Cloud separately notes that most quota-change requests are evaluated by automated systems, and some requests may be denied. In addition, for certain limit-increase requests, prepayment may be required. In other words, the path of “we’ll just raise the quota” is not always instant and not always free.

That is why the platform starts getting in the way not only through money, but through rhythm. The team loses speed not because it lacks ideas, but because the environment itself demands more caution, more planning, and more technical overhead than the business would like.

Against this background, the next topic becomes completely natural: what happens when convenience inside the Google ecosystem gradually turns into dependency on it.

The Google Ecosystem: When Comfort Inside the Platform Turns Into Dependence

Where Convenience Turns Into Lock-In

Dependency rarely comes from one big decision. It builds up through a dozen small ones that each look perfectly reasonable on their own.

A service stores data in one model. Background processes depend on specific queues. Access is built around the current logic of roles and service accounts. Monitoring and alerts live in familiar tools. Deployment automation, network rules, and the internal logic of the environment are already tailored to the platform as well.

Because of this, a project gradually stops being portable “by default.” It becomes portable only after rework.

This is easy to show in a simple table:

| What initially looks like a convenience | What it turns into when you try to leave |
| --- | --- |
| Ready-made services around the application | They have to be replaced or rebuilt in the new environment |
| A familiar model of roles and access | Access has to be rebuilt from scratch according to a different logic |
| Observability and alerts “out of the box” | Monitoring and operational workflows have to be rebuilt almost from scratch |
| Automation inside the platform | Scripts, pipelines, and runbooks start depending on the old environment |
| Platform-native networking and routing | The new setup no longer matches the way the project used to live |

That is why dependence on the ecosystem rarely feels like “someone is holding us in place by contract.” More often, it looks like this: the team built a convenient but overly platform-dependent way of working, and is now paying for that tight coupling with the complexity of leaving.

Why Leaving Later Costs More Than It Seemed at the Start

While the project is running inside one environment, this convenience is almost invisible. But when the company tries to leave, it becomes clear that not only data and services have to move. Along with them, the team also has to pull out roles, access rights, network logic, familiar operational workflows, and everything that previously lived inside the platform almost “by itself.”

For the business, this is unpleasant for a simple reason. On paper, it looks like infrastructure needs to be moved. In practice, the company also has to rebuild the way of working around that infrastructure.

That is why leaving GCP often looks less like relocation and more like a partial redesign of the system. The deeper the project has lived inside Google’s service logic, the stronger this effect becomes.

And from here, the next natural step is to look at what practitioners, public cases, and real market signals say about all of this.

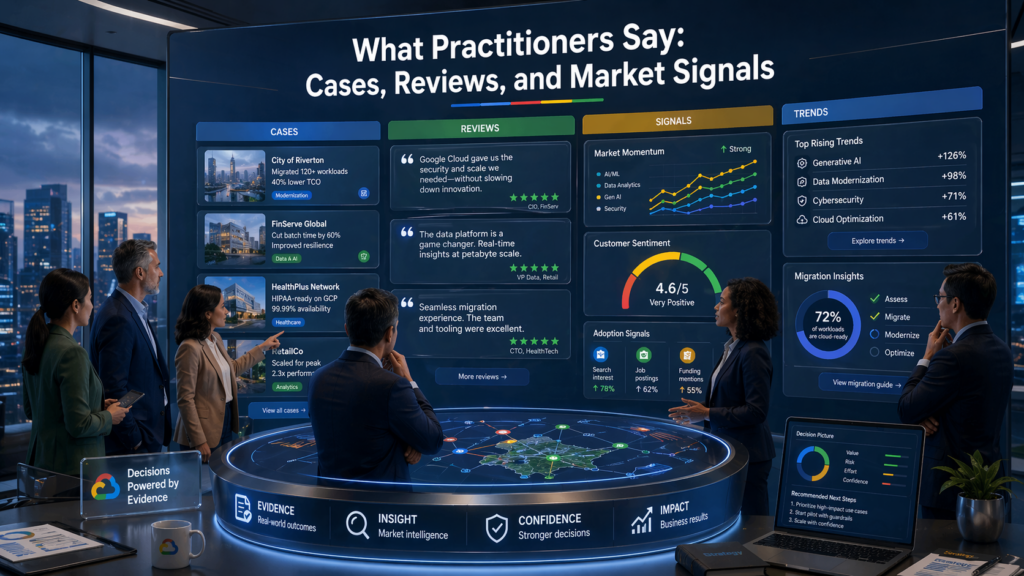

What Practitioners Say About It: Cases, Reviews, and Market Signals

If you look beyond documentation and pricing pages, the picture becomes more vivid. In practice, the conversation about leaving GCP usually revolves around three things: unpleasant billing surprises, limitations that start getting in the way of work, and the feeling that the project has grown too deeply into the Google ecosystem.

Public discussions among users show this quite well. In Reddit threads, people complain not only about “expensive cloud in general,” but about very specific stories: unexpected BigQuery bills, problems with cost transparency, and frustration with quotas that appear during real work rather than in a calm planning stage.

For example, in one post, a user described receiving a bill of around €50,850 after working with BigQuery, while in another, someone mentioned £847 for an experiment connected to a Google-sponsored AI Residency. These are not market statistics but user-level signals, and they show clearly where fear of unpredictable costs tends to build up.

The same themes usually appear in such feedback:

- The bill is frustrating not because of one huge generic line, but because of unpleasant surprises in services like BigQuery

- Quotas and limits feel not like abstract platform rules, but like brakes on real work

- Convenience inside the ecosystem gradually starts looking like dependency that is not easy to unwind

But it is important not to overestimate these reviews. Reddit and Hacker News are useful not as strict statistics, but as a layer of real pain points. They do not prove that GCP is “bad for everyone,” but they show well where user frustration begins to accumulate.

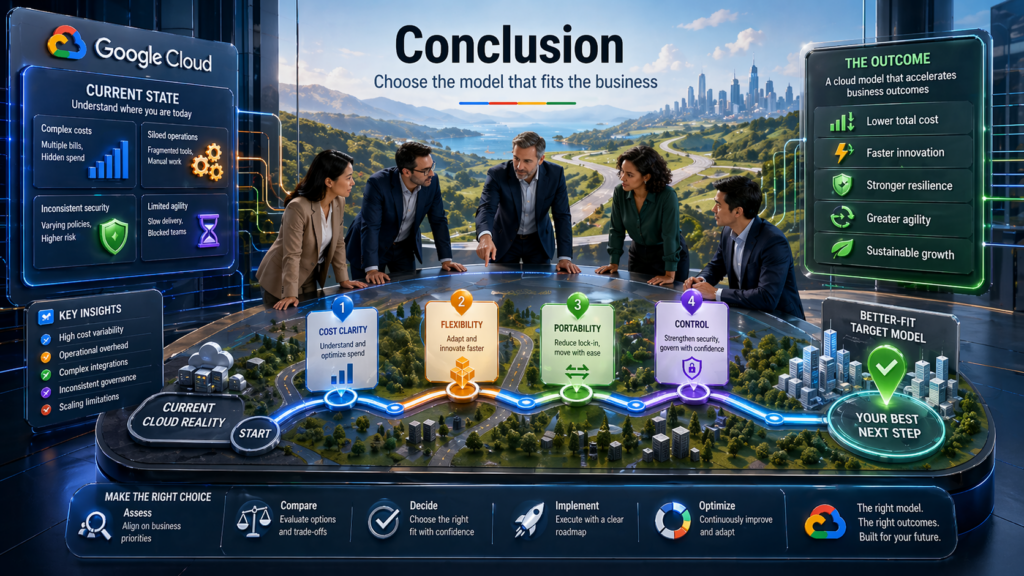

Conclusion

Companies usually leave not because the platform suddenly “breaks,” but because at some point the combination of cost, limitations, and ecosystem dependency stops matching the business’s needs.

While the project is small, this may barely be noticeable. But as it grows, the bill becomes less transparent, quotas and platform rules interfere with the working rhythm more often, and the convenience inside the platform gradually turns into dependency.

This matches both what is visible in Google Cloud’s own materials on quotas and network pricing, and what users write about in discussions around unexpected bills and real-world limitations.

So the final question is better phrased this way: is the problem really the platform — or the way the business has built its current setup around it?

The more honestly the company answers that before migration, the cheaper the next decision will be.

FAQ

If the only reason is price, is that already enough to leave?

Not always. A high bill by itself does not prove that the platform has become a poor fit. GCP has built-in budgets and cost alerts, so if what the business is missing is cost control rather than an entirely new infrastructure model, some problems can be caught earlier.

Which warning sign looks small at first but hurts the most later?

Often, quotas. While the project is small, they are barely noticeable. But Google Cloud directly states that quotas limit the use of many different resources — from APIs to network components — and as the project grows, they can start slowing down not just the technical setup, but the team’s pace.

Is BigQuery the main pain point in GCP?

No. BigQuery is just a visible example because costs there can easily become a surprise for an unprepared team. But users also complain about network costs, overall bill predictability, and small cost items gradually slipping out of control.

Can you tell when the problem is no longer the platform, but your operational discipline?

Yes. One of the most honest tests is to check whether budgets, notification thresholds, and cost controls are configured at all. Google Cloud recommends using budgets and alerts to see in advance when spending starts moving outside expected limits. If this is not in place, part of the frustration may come not from the platform itself, but from weak control.
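The logic behind such a check is small enough to sketch. The example below is an illustration of the idea, not Google Cloud's implementation: the function name is hypothetical, the 50%, 90%, and 100% thresholds are assumptions chosen for the example, and the budget and spend figures are invented.

```python
from typing import List, Sequence


def crossed_thresholds(budget_amount: float,
                       spend_to_date: float,
                       thresholds: Sequence[float] = (0.5, 0.9, 1.0)) -> List[float]:
    """Return the budget fractions that current spend has already reached."""
    if budget_amount <= 0:
        raise ValueError("budget_amount must be positive")
    ratio = spend_to_date / budget_amount
    return [t for t in sorted(thresholds) if ratio >= t]


# Invented figures: a 2000 USD monthly budget with 1850 USD spent so far.
reached = crossed_thresholds(budget_amount=2000.0, spend_to_date=1850.0)
print("Thresholds reached:", [f"{t:.0%}" for t in reached])
```

If nobody on the team can say which thresholds exist or who receives the notification when one is crossed, that gap is a discipline problem, and it will follow the project to any other provider.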

What makes leaving the Google ecosystem especially unpleasant?

Usually not one “big” service, but a combination of familiar things: IAM, service accounts, network rules, automation, and internal operational workflows. Google Cloud describes service accounts as having their own lifecycle and management rules, which is a good reminder that dependency is not hidden in one button, but in the team’s everyday operating logic.

Is there a strange but useful question to ask before leaving GCP?

Yes: “Do we want less cloud — or just another cloud?” If the company still needs a large service set, a broad platform, and the same class of ecosystem, the problem may not be the model itself, but its current implementation. But if the business wants fewer layers, fewer rules, and more direct infrastructure, then it is not only the provider that needs to change — the approach itself does too.

This conclusion comes from the fact that the main pain points around GCP usually revolve around quotas, network economics, and service coupling, not around one “wrong” VM.

Sources

1. Google Cloud — Quotas overview