Migration from Google Cloud Platform is rarely just a matter of moving data and services out. Usually, it is not one step, but a chain of three stages: first, you need to understand what actually lives in the cloud and what it depends on; then you have to move the data and services carefully; and after that, the system still has to survive its new life on another platform.

In simple terms, the route usually looks like this:

- First, the business needs to understand why it wants to leave at all and what exactly it plans to move.

- Then the project runs into real dependencies, data, services, and the cutover window.

- After launch, it becomes clear whether the new setup is actually convenient for the team — and whether the migration became too expensive in money, time, and effort.

The main mistake is to think that the hardest part begins when data copying starts. In practice, the pain often appears earlier, in dependencies, and later, during cutover and post-launch operation.

For the business, the risk is not only downtime, but also timelines, loss of momentum, rework, and additional pressure on the team.

Below, we will look at what usually pushes companies to leave Google Cloud Platform, what such a migration looks like step by step, and where the project most often starts costing more than expected.

What Usually Pushes a Business to Leave Google Cloud Platform

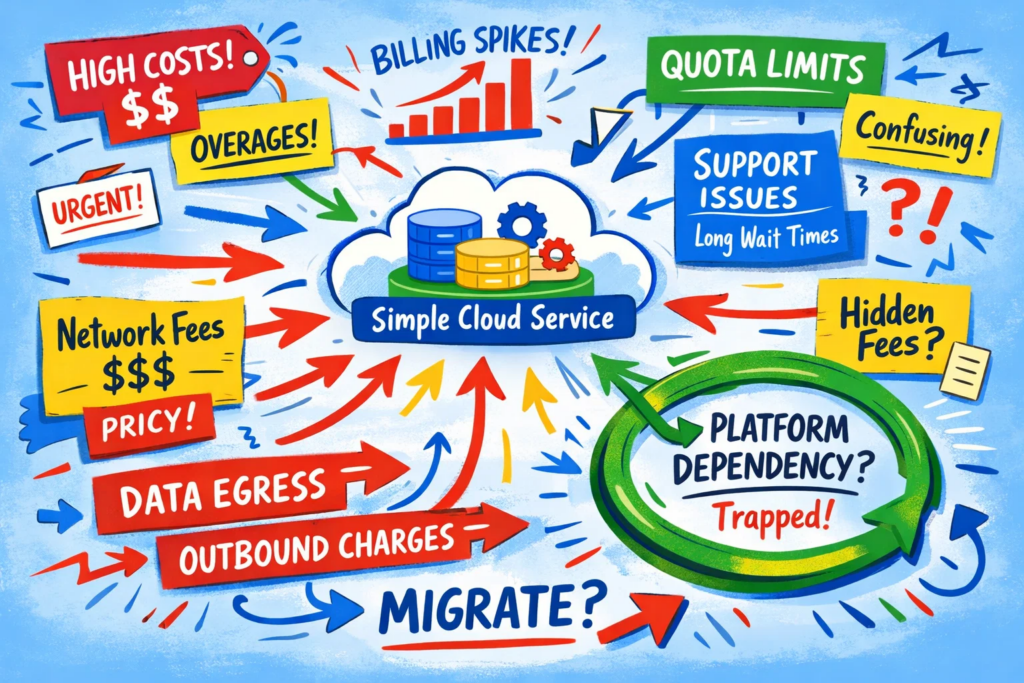

Companies rarely leave major providers — especially Google Cloud Platform — for one single reason. Usually, the decision is made up of several factors at once: the economics become less attractive, certain limitations begin getting in the way, and the platform stops being just a convenient background layer and becomes a noticeable factor in the architecture and daily work.

This is visible even in Google’s own official materials. The platform has separate pages on quotas and limits, network pricing, paid support, and cost management. By itself, that is normal for a large cloud. But it is exactly in these layers that some teams begin to feel fatigue: the project grows, and with it grow not only compute needs, but also the number of limitations, pricing nuances, and organizational dependencies.

Most often, businesses are pushed toward leaving by factors like these:

| What starts becoming frustrating | How it looks in practice |
| --- | --- |
| Costs become harder to understand | Beyond the resources themselves, network pricing, egress, and side costs become more noticeable |
| Quotas and limits get in the way of growth | The project runs not into the idea itself, but into restrictions across services, APIs, or networks |
| Support stops meeting expectations | For some teams, the cost or quality of support no longer matches expectations |
| The business wants a simpler infrastructure model | What is needed is VMs, networking, storage, and Kubernetes without a large platform layer around them |
| Unwanted platform lock-in appears | Migration starts looking less like a normal platform change and more like an architectural rebuild |

If we look at the official side of the question, Google Cloud does have several typical areas that can push a team to reassess the platform:

- First, quotas and limits: Google directly states that quotas restrict resource usage and apply to many different types of services — from APIs to network components and load balancers.

- Second, network economics: Google Cloud has separate pages for network pricing and tiered egress, and Premium Tier is enabled by default for data transfer.

- Third, support: Standard Support starts at $29 per month or 3% of monthly charges, and higher-tier plans can quickly become a noticeable cost item.

At the level of user feedback, the picture is similar, just phrased less diplomatically. In Reddit discussions, people regularly complain about unexpected bills, slow or weak support, complex interfaces, and the overall heaviness of the platform for smaller projects. These are anecdotal signals, not market statistics or the ultimate truth, but they do show where real users often feel frustration.

But here it is important not to confuse cause and symptom. Sometimes the business really has outgrown the current model and needs a different balance between price, control, and complexity. And sometimes the problem is not the platform itself, but that the project grew for too long without proper cost discipline, architecture, and dependency management.

That is why the first question is not simply “should we leave or not?” but “what exactly has Google Cloud Platform stopped doing well enough for this business?” The answer to that question determines everything else: whether migration will be a reasonable step or just an expensive attempt to change the scenery.

Stage One: First Understand What Actually Lives in the Cloud

At this point, migration still tends to look manageable. It may seem that all you need to do is collect a list of services, estimate the data volume, and choose the new platform.

But this is exactly where projects most often underestimate the real scope of work.

The problem is that the business usually remembers only the top layer: the website, the API, the database, storage, and maybe a couple of background jobs. Underneath that, however, there is almost always a second layer that gets remembered too late: access rights, service accounts, network rules, backups, observability, queues, DNS, alerts, schedules, scripts, and all the small things the project has been relying on “in the background.”

That is why the first stage is not just inventory for the sake of a checklist. It is an attempt to answer honestly: what exactly needs to be migrated, what can be rebuilt, and what should not be carried into the new environment at all.

Where Dependencies Are Usually Underestimated

Most often, the project misjudges not the number of virtual machines or buckets, but the number of connections between them.

One application can pull along a database, object storage, service roles, internal addresses, access rules, logging, nightly jobs, webhooks, and integrations with external systems. On a diagram, it may look like “one service.” In a migration, it becomes a chain of several dependent pieces that cannot safely be moved separately without risking breakage.

This is especially easy to underestimate in small and mid-sized projects. Suppose you do not have a huge platform, but a fairly ordinary service: a website, a user account area, file uploads, a request form, and a few background processes. On paper, it may seem that there is not much to get tangled in.

In practice, different things start appearing:

- Files live in one place, while links to them depend on the current delivery logic

- The database is tied to background jobs and notifications

- Access rights are built around the current roles and service accounts

- Monitoring and alerts are configured as if this environment will live forever

- Some operational scenarios depend on old scripts that are remembered only at the last moment

That is exactly why the assessment stage often determines whether the migration will be controlled or chaotic. If the dependencies are not mapped in advance, the project then starts moving almost blindly.
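One way to make such a dependency map concrete is to write it down as data and let a topological sort propose the order in which pieces can move. The sketch below is only an illustration: the component names and the dependencies between them are hypothetical, and a real inventory would be larger and messier. It uses `graphlib` from the Python standard library (Python 3.9 or newer).

```python
from graphlib import TopologicalSorter

# Hypothetical inventory: each component maps to the components it depends on.
DEPENDS_ON = {
    "website": {"api", "object-storage"},
    "api": {"database", "service-accounts"},
    "nightly-jobs": {"database", "object-storage"},
    "alerts": {"api", "nightly-jobs"},
    "database": {"network-rules"},
    "object-storage": {"service-accounts"},
    "service-accounts": set(),
    "network-rules": set(),
}


def migration_waves(depends_on):
    """Group components into waves so each wave depends only on earlier waves."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError if dependencies are circular
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = migration_waves(DEPENDS_ON)
for number, wave in enumerate(waves, start=1):
    print(f"Wave {number}: {', '.join(wave)}")
```

With this sample data, access rights and network rules land in the first wave and the website and alerts in the last, which matches the point above: the "one service" on the diagram is the final link of the chain, not the first.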

Stage Two: Move Services and Data Without Pretty Illusions

After the assessment stage, a dangerous feeling often appears: the hardest part is already behind us. The service map has been created, dependencies are more or less understood, data volumes have been estimated — so now all that remains is to move everything carefully.

This is exactly where the plan most often collides with reality.

On paper, migration looks linear: first the data, then the services, then the cutover. But in a live project, these pieces rarely move so neatly. Data has to be not merely copied, but transferred without loss or drift. Services have to be not merely launched, but checked to ensure they behave in the new environment as expected. And the switch itself almost never comes down to pressing one button.

The closer the project gets to cutover, the more obvious one simple fact becomes: migration is not only about moving things, but about synchronizing two worlds — the old one and the new one — for some period of time.

Why the Cutover Window Is Almost Always More Complicated Than Expected

The most stressful part of migration usually does not begin when data is being copied. It begins when the old and new environments have to meet at one point.

Before that, everything can still be treated as preparation. After that, the real business risk begins: some users may still be going through the old path, some traffic may already be reaching the new setup, and the team has to make sure that data, access rights, and service behavior do not drift apart at the worst possible moment.

At the start, the cutover window often looks short and almost purely technical. In practice, it stretches because of very ordinary things:

- Data has changed again after the first synchronization

- The new environment behaves differently under load than it did in tests

- Some integrations were not checked fully enough

- DNS, routes, or external dependencies converge more slowly than expected

- The team needs not only to switch over, but also to quickly understand whether everything is actually working properly

That is why cutover almost always turns out to be more complex than it looks in the plan. It is no longer just an engineering operation, but a short period in which the project is especially vulnerable both technically and organizationally.
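The first item in that list, data that changed after the initial synchronization, is one of the few that can be checked mechanically. A minimal sketch, assuming both environments can export the same records as plain dictionaries with a shared `id` field (the field names and sample rows here are hypothetical):

```python
import hashlib


def row_fingerprint(row):
    """Stable hash of one record; keys are sorted so field order does not matter."""
    canonical = "|".join(f"{key}={row[key]}" for key in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def find_drift(old_rows, new_rows, key="id"):
    """Compare two snapshots and report records that are missing, extra, or changed."""
    old = {row[key]: row_fingerprint(row) for row in old_rows}
    new = {row[key]: row_fingerprint(row) for row in new_rows}
    return {
        "missing_in_new": sorted(old.keys() - new.keys()),
        "extra_in_new": sorted(new.keys() - old.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


# Hypothetical snapshots taken from the old and new environments.
old_snapshot = [
    {"id": 1, "email": "a@example.com", "status": "active"},
    {"id": 2, "email": "b@example.com", "status": "active"},
    {"id": 3, "email": "c@example.com", "status": "pending"},
]
new_snapshot = [
    {"id": 1, "email": "a@example.com", "status": "active"},
    {"id": 2, "email": "b@example.com", "status": "disabled"},
]

report = find_drift(old_snapshot, new_snapshot)
print(report)
```

Here record 3 never reached the new environment and record 2 differs between the two sides. A check like this does not shorten the cutover window by itself, but it turns "we think the data matches" into something the team can verify before and after the switch.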

Right after it comes the third stage, which is no less uncomfortable: the new environment is already live, but the business still has to understand how convenient and how expensive it will be to live with.

Stage Three: Relaunch Everything and Avoid Losing Control

By this point, the team is tempted to breathe out. The data has arrived, the services are up, and the cutover window has been survived — so it feels like the hardest part is already behind them.

But in practice, this is often where the second part of the migration begins. It is not as loud, but it is much stickier.

Now the task is not simply to keep the service online, but to understand whether the new environment is actually comfortable to live with. How quickly can the team investigate incidents? Are alerts clear and useful? Is it easy to deploy releases, roll back changes, and handle normal operational tasks? Or will it turn out in a couple of weeks that the project has technically moved, but day-to-day work has become heavier?

This usually shows up like this:

| What seems completed | What becomes clear only after launch |
| --- | --- |
| The service is running | Some scenarios behave differently under real load |
| Monitoring is configured | There are too many signals, too few signals, or they go to the wrong place |
| The release process is assembled | The team spends more time on manual checks and adjustments |
| Access has been granted | Permissions, roles, and internal boundaries do not work as conveniently as before |
| Migration is formally closed | The team spends a long time cleaning up small consequences of the move |

This is where it becomes clear whether the migration was truly manageable or whether the project was simply dragged across the finish line through stress.

If the new environment makes operations clearer, gives the team better control, and does not force it to live in a constant state of manual adjustment, that is a good sign.

If not, the business quickly starts paying not for the move itself, but for the life after it. And that naturally leads to the final part of the article: what this kind of migration risks for the business beyond purely technical problems.

Where the Business Pays the Most for This Kind of Migration

By this point, the technical side is already more or less clear. But for the business, the main question is usually not “can we move the service?” but what the move will cost in money, timelines, and lost momentum.

Suppose you have a small service with monthly revenue of €12,000–15,000. The current cloud infrastructure costs, say, €1,400 per month, and the team believes that on a new platform it can fit into €900–1,000. On paper, the savings look attractive: around €400–500 per month.

But then the part that is hard to see in the first calculation begins.

| What the business sees first | What has to be counted in reality |
| --- | --- |
| €500/month in savings | Plus 2–3 months of paying for both the old and new environments |
| “Technical migration” | Team hours for migration, testing, rewiring, and validation |
| Migration as a one-time task | Lost momentum on other tasks and releases |
| A cheaper new platform | Risk of errors and performance drops during cutover |

In the end, the picture may look like this:

- €1,000–1,500 — temporary coexistence of the two environments

- €2,000–4,000 — team hours for migration, checks, and rework

- €500–1,500 — delays, repeated migration windows, and small fixes

- Additional losses — if requests, sales, or support quality drop during cutover

The itemized costs alone add up to €3,500–7,000. Once cutover losses are added, a service that was supposed to “save €500 per month” may first consume €5,000–8,000 just on the transition itself.

And that already changes the conversation. Such a migration will not pay off in one month, or even in two. At €400–500 per month in savings, recovering even the itemized costs takes roughly 7 to 18 months, and that is in a calm scenario where the project has not suffered a serious failure, dragged out deadlines, or pulled too much attention away from the team.

That is why, for the business, the main migration risk often lies not in the new platform itself, but in the gap between expected savings and the real cost of transition.
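The arithmetic behind that gap is simple enough to keep in a small script and rerun whenever an estimate changes. The sketch below sums only the itemized ranges from the example above and leaves out the unquantified cutover losses. The variable and function names are illustrative, not part of any tool.

```python
import math

# Figures from the example above, in euros.
CURRENT_MONTHLY = 1400
NEW_MONTHLY_RANGE = (900, 1000)
ONE_TIME_COSTS = {
    "two environments running in parallel": (1000, 1500),
    "team hours for migration, checks, and rework": (2000, 4000),
    "delays, repeated windows, and small fixes": (500, 1500),
}


def transition_cost_range(costs):
    """Sum the low and high ends of every itemized one-time cost."""
    low = sum(lo for lo, _ in costs.values())
    high = sum(hi for _, hi in costs.values())
    return low, high


def break_even_months(one_time_cost, monthly_saving):
    """Whole months of savings needed to recover a one-time cost."""
    if monthly_saving <= 0:
        raise ValueError("no monthly saving, so the migration never pays back")
    return math.ceil(one_time_cost / monthly_saving)


low_cost, high_cost = transition_cost_range(ONE_TIME_COSTS)
best_saving = CURRENT_MONTHLY - NEW_MONTHLY_RANGE[0]   # cheapest new platform
worst_saving = CURRENT_MONTHLY - NEW_MONTHLY_RANGE[1]  # most expensive new platform

best_case = break_even_months(low_cost, best_saving)
worst_case = break_even_months(high_cost, worst_saving)

print(f"Itemized transition cost: €{low_cost:,} to €{high_cost:,}")
print(f"Break-even: {best_case} to {worst_case} months")
```

On these inputs the itemized costs come to €3,500–7,000 and the break-even point falls between 7 and 18 months. Any lost requests or sales during cutover push that point further out.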

Conclusion

If you look at this kind of migration from the business side, the question usually comes down not to the move itself, but to its cost.

Not only the cost of the new infrastructure, and not only the potential savings after launch.

The real cost sits between those two points: in timelines, double payment for the old and new environments, team hours, rework, migration windows, and the loss of momentum on the core product.

That is why migration from Google Cloud Platform cannot be evaluated properly by the logic of “it will be cheaper there.” For the business, that is not enough.

A proper calculation begins when you compare not only the monthly bill before and after, but the entire path between them. If the new setup later provides clearer economics, better control, and a more convenient operating life, then the transition can still be considered meaningful.

If not, migration quickly turns into an expensive project that consumes money, team attention, and months of work — while giving back less than it promised at the start.

FAQ

Is it really difficult to move a project away from Google Cloud Platform without rework?

Often, yes. Even Google’s own materials describe migration not as one step, but as discovery, assessment, planning, and migration waves. That is a good sign that the problem is usually not one VM, but the relationships between applications, data, and infrastructure.

Why do dependencies so often appear too late?

Because at the top level, the project looks simpler than it really is. In Migration Center, Google separately recommends cataloging workloads, mapping them to infrastructure, and analyzing dependencies before the migration starts. Without that, the team can easily end up moving not a system, but a set of poorly connected pieces.

Is data copying the most stressful moment?

Not always. For large data sets, Google describes separate transfer strategies and notes that cloud-to-cloud migrations can use Storage Transfer Service. In projects where downtime needs to be minimized, the bottleneck is often not the first copy itself, but cutover, synchronization, and final switching.

Can quotas and limits really disrupt the migration plan?

Yes. Google Cloud quotas limit the use of different resource types, including APIs, networking, and compute. This does not necessarily break the whole migration, but it can easily disrupt timelines, estimates, and launch order if those limits appear too late.

What is usually underestimated after launch on the new platform?

Most often, not the launch itself, but the life after it: releases, alerts, quotas, operations, and the team’s everyday workflows. Formally, the migration may be complete, but operational load and manual work can keep following the project long after cutover. This fits well with the fact that Migration Center focuses on planning, grouping, and migration waves — not only on the launch moment.

Is there a migration risk that looks small but hurts the most?

Yes: an overly optimistic estimate of the work involved. If the team underestimates dependencies, data volume, network limitations, or quotas, the project starts losing not only time, but also control. Very often, the most painful overruns are built from exactly those “small things.”

Sources

1. Google Cloud — Migration Center overview

2. Google Cloud — About migration planning

3. Google Cloud — Plan your migration waves

4. Google Cloud — Cloud Quotas overview